Like many (most?) scientists, I’ve often wondered what a world without Elsevier would look like. Not just Elsevier, mind you; really, the entire current structure of academic publishing, which revolves around a gatekeeper model where decisions about what gets published where are concentrated in the hands of a very few people (typically, an editor and two or three reviewers). The way scientists publish papers really hasn’t kept up with the pace of technology; the tools we have these days allow us, in theory, to build systems that support the immediate and open publication of scientific findings, which could then be publicly reviewed, collaboratively filtered, and quantitatively evaluated using all sorts of metrics that just aren’t available in a closed system.

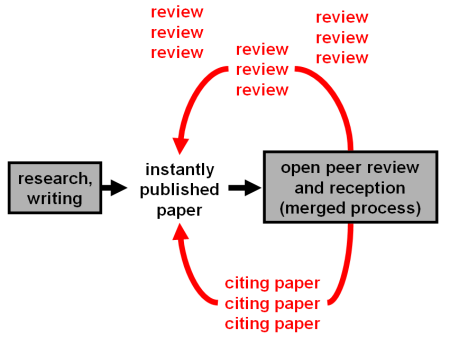

One particularly compelling vision is articulated by Niko Kriegeskorte, who presents a beautiful schematic of one potential approach to the future of academic publishing. I’m a big fan of Niko’s work (see e.g., this, this, or this). Almost everything he publishes is great, and his articles consistently feature absolutely stunning figures; these ideas are no exception. The central motif, which I’m wholly sympathetic to, is to eliminate gatekeepers and promote open review and evaluation. Instead of a small group of other researchers (and potential competitors) making behind-the-scenes decisions about whether to accept or reject your paper, you’d publish your findings online in a centralized repository as soon as you felt they were ready for prime time. At that point, the broader community of researchers would set about evaluating, rating, and commenting on your work. Crucially, all of the reviews would also be made public (either in signed or anonymous form), so that other researchers could evaluate not only the work itself, but also the responses to it. Reviews would therefore count as a form of publication, and one can then imagine all sorts of sophisticated metrics that could take into account not only the reception of one’s publications, but also the quality and nature of the reviews themselves, the quality of one’s own ratings of others’ work, and so on. Schematically, it looks like this:

Anyway, that’s just a cursory overview; Niko’s clearly put a lot of thought into developing a publishing architecture that overcomes the drawbacks of the current system while providing researchers with an incentive to participate (the sociological obstacles are arguably greater than the technical ones in this case). Well, at least in theory. Admittedly, it’s always easier to design a complex system on paper than to actually build it and make it work. But you have to start somewhere, and this seems like a pretty good place.

I note that a fairly good approximation of this would be to have everyone publish their work on their own Blogger or WordPress blog, with comments turned on…

I think that would be a start; but I think centralization is really important, because following blogs only really works for blogs you already know about. A centralized website that allowed tagging, searching, rating, and accumulation of user stats would simplify and streamline things considerably, while also giving people more incentive to review/comment. I don’t see many researchers taking the time to comment on individual blogs, but if reviews were visible to the community at large, and actually counted for something that could be readily quantified (e.g., number of reviews each user’s provided; average rating of those reviews by other members of the community, etc.), I think reviews/comments would be much more likely.
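To make the "counted for something" part a bit more concrete, here is a minimal Python sketch of the two reviewer stats mentioned above: how many reviews each user has written, and the average rating those reviews received from other members. Everything in it (the `Review` record, the `reviewer_stats` function, the field names, the sample data) is hypothetical and invented for illustration; it isn't part of Niko's proposal or of any existing system.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class Review:
    """Hypothetical record for one public review (illustration only)."""
    reviewer: str   # who wrote the review
    paper_id: str   # which paper the review evaluates
    ratings: List[int] = field(default_factory=list)  # scores other users gave this review


def reviewer_stats(reviews):
    """Return {reviewer: (number of reviews written, mean rating of those reviews or None)}."""
    by_reviewer = defaultdict(list)
    for review in reviews:
        by_reviewer[review.reviewer].append(review)

    stats = {}
    for reviewer, written in by_reviewer.items():
        # Pool every rating received across all of this reviewer's reviews.
        received = [score for review in written for score in review.ratings]
        average = mean(received) if received else None  # None: nobody has rated them yet
        stats[reviewer] = (len(written), average)
    return stats


# Made-up sample data: two reviewers, one of whom has not been rated yet.
reviews = [
    Review("alice", "paper-1", [5, 4]),
    Review("alice", "paper-2", [3]),
    Review("bob", "paper-1"),
]
stats = reviewer_stats(reviews)
print(stats)
```

A real site would presumably want something less gameable than a plain average (weighting ratings by the rater's own track record, say), but even these two numbers would be hard to assemble from comments scattered across individual blogs.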

OK – instead of reviews in the form of comments, you could review in the form of blog posts that TrackBack to the original “paper”… so all of your work, and reviews, would be right there on your blog, and to see the reviews on a “paper”, you’d just look at the list of TrackBacks… :)

I’m only semi-serious… but I do think it’s important to think about how something like this would be achieved, and in particular, how it would get started, because, as you say, the obstacles are sociological, not technical. To my mind what you need is something that people can unilaterally adopt (i.e. by starting a blog) rather than having a centralized system that would require some degree of consensus (“We’re all going to use this system from now on”)…

The biggest sociological obstacle though is that not many people are going to unilaterally adopt anything like this, because the first few people to do it would look like weirdos, and would only seem far-sighted in retrospect. I think the only people who could “get away with it” are people with a well-established reputation (and tenure). But equally, it would only take a couple of such people to say “I’m giving up on journals, here’s my blog” to get the ball rolling…